4 Indian researchers in US develop unique tech to prevent ID leaks during AI photo editing – all about ‘PrivateEdit’

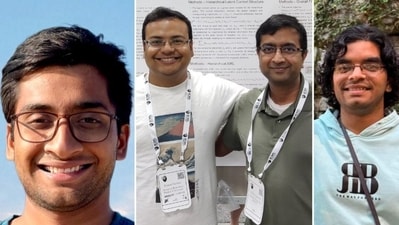

A team of Indian researchers has developed a patent-pending technology to prevent identity leaks during AI photo editing. The system was spearheaded by Dipesh Tamboli, Vaneet Aggarwal, and Vineet Punyamoorty of Purdue University, who developed the core architecture. They were joined by technical collaborator Atharv Pawar from the University of Michigan to co-author the research, creating a pipeline that secures personal images before they are uploaded to third-party AI platforms.

The researchers opened up about the project in a conversation with HindustanTimes.com. Speaking about the inspiration behind the project, Dipesh explained that it “came from a specific moment in early 2025 when AI ‘Ghibli-style’ filters went viral.”

The viral trend of Ghibli-style portraits took social media by storm in early 2025, with netizens asking AI to convert their pictures into a distinct anime style. While many loved OpenAI’s new feature, some slammed the trend as “disrespectful” to Studio Ghibli co-founder Hayao Miyazaki, who previously criticised AI-generated animation as “an insult to life itself” and said he “would never wish to incorporate this technology into my work at all.”

The inspiration behind the technology and why it is unique

“Millions were uploading personal photos to transform themselves into cartoons, but at the same time, governments – including the Indian government – were issuing urgent warnings about the risks of uploading biometric data to third-party servers,” Dipesh, a doctoral alumnus, told HindustanTimes.com. “It was a massive ‘privacy tax’: to use these creative tools, you had to surrender your face. I realized that once high-resolution biometric data is uploaded, users lose all control over it. I started thinking: how can we get these amazing AI results without the personal information leak? That question led to PrivateEdit.”

With the technology on its way, one may wonder what it offers that existing tools do not. Dipesh had an answer.

“Most privacy tools today are ‘reactive’ – they try to fix the problem after your data has been sent. PrivateEdit is ‘Privacy by Design.’ We’ve introduced a way to ‘decouple’ your identity from the rest of the image. What’s truly new is that our tech works with the big AI models you already use – like Midjourney or ChatGPT – without them needing to change a thing. We also introduced a ‘Trust Slider’ that gives the power back to the user; you can decide exactly how much information to hide based on how much you trust a specific platform. It’s personalized protection that hasn’t existed until now,” he explained.

How does the technology work?

Vineet, a doctoral candidate in computer and electrical engineering, explained in detail how the technology works.

“We developed a pipeline that acts like a ‘secure filter’ between you and the AI. Instead of sending your whole photo to the cloud, our system works locally on your device first,” he told HindustanTimes.com. “It uses advanced segmentation to find the ‘identity-sensitive’ parts of your face, the unique markers that make you you, and puts a digital mask over them. We then send only the ‘background’ and the masked version to the AI. The AI performs the edits you requested, and then the photo is sent back to your device, where your real facial details are safely re-inserted. The AI gets the job done, but it never actually ‘sees’ the real you.”
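The flow Vineet describes (mask on the device, edit in the cloud, re-insert on the device) can be illustrated with a toy sketch. This is not the PrivateEdit code, which the article does not show. Every name here is hypothetical, a fixed rectangle stands in for the segmentation step, a tiny grayscale grid stands in for a photo, and `brighten` stands in for the third-party AI edit.

```python
from typing import Callable, List, Tuple

Image = List[List[int]]            # toy grayscale image, pixel values 0-255
Box = Tuple[int, int, int, int]    # (top, left, bottom, right), bottom/right exclusive

MASK_VALUE = 0  # assumption: masked pixels are simply blanked out


def mask_region(image: Image, box: Box) -> Tuple[Image, Image]:
    """Return (masked copy to upload, private patch that stays on the device)."""
    top, left, bottom, right = box
    patch = [row[left:right] for row in image[top:bottom]]
    masked = [row[:] for row in image]
    for r in range(top, bottom):
        for c in range(left, right):
            masked[r][c] = MASK_VALUE
    return masked, patch


def reinsert_region(edited: Image, patch: Image, box: Box) -> Image:
    """Paste the private patch back into the edited image, on the device."""
    top, left, bottom, right = box
    result = [row[:] for row in edited]
    for r in range(top, bottom):
        result[r][left:right] = patch[r - top]
    return result


def private_edit(image: Image, box: Box,
                 remote_edit: Callable[[Image], Image]) -> Image:
    """Mask locally, let the remote service edit, then restore locally."""
    masked, patch = mask_region(image, box)
    edited = remote_edit(masked)   # the only step that sees any pixels remotely
    return reinsert_region(edited, patch, box)


def brighten(image: Image) -> Image:
    """Hypothetical stand-in for a cloud AI edit: brighten every pixel."""
    return [[min(255, p + 50) for p in row] for row in image]


if __name__ == "__main__":
    photo = [
        [10, 10, 10, 10],
        [10, 200, 210, 10],
        [10, 220, 230, 10],
        [10, 10, 10, 10],
    ]
    for row in private_edit(photo, (1, 1, 3, 3), brighten):
        print(row)
```

In this sketch the remote function only ever receives the blanked-out copy, while the original pixels inside the box never leave `private_edit`. A real system would need a proper segmentation model and a way to make the restored region match the edited style, neither of which is attempted here.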

One may wonder if the technology is only for tech experts, or if regular smartphone users can use it too. Dipesh said that they were determined not to make this just a “lab experiment,” and stressed that it is user-friendly.

“The goal is for this to feel like a standard photo editing app. You don’t need to know how AI works or what ‘segmentation’ is; you just use a simple slider to choose your privacy level, and the app handles the complex ‘masking’ and ‘reconstruction’ in the background. Privacy shouldn’t be a chore; it should be as easy as applying a filter,” he said.

Major privacy risks associated with AI editing tools

Vaneet, a University Faculty Scholar and the Reilly Professor of Industrial Engineering with courtesy appointments in the Department of Computer Science and the Elmore Family School of Electrical and Computer Engineering, said that the primary risks are data persistence and function creep, where data collected for one purpose ends up being used for another.

“Many users assume their photo is deleted once the ‘filter’ is applied, but often that data becomes part of a permanent digital footprint used for surveillance, profiling, or training future models without explicit consent. In the current landscape, your biometric identity is being harvested as a commodity. Moving toward ‘Privacy-by-Design’ frameworks like the one we’ve developed is essential to ensure that the AI revolution doesn’t come at the cost of fundamental human autonomy,” explained Vaneet, in whose research group both Dipesh and Vineet worked.

Atharv, the technical collaborator, explained what risks can be reduced with the Purdue researchers’ new technology.

“When you upload raw photos, they can be stored indefinitely, leaked in a server breach, or even used to train ‘deepfakes’ without your permission. By using our masking system, sensitive data is never even transmitted to the cloud. It also helps companies; they can now offer AI photo tools to their customers without the massive legal and ethical liability of storing thousands of people’s private facial data,” he said.

Atharv also claimed that this technology can be used by big companies like Adobe, Apple, or Google, calling it “the ideal future for this tech.”

“Because our pipeline doesn’t require companies to change their existing AI models, it can be integrated into current apps as a ‘Privacy Layer.’ It would allow these big tech companies to provide amazing generative features while proudly telling their users: ‘We never even see your raw photos.’ It’s a win-win for both the company’s reputation and the user’s safety,” he explained.

How this research impacts the future of AI regulations and laws

Vaneet noted that governments across the world are struggling with how to regulate AI.

“Most laws focus on what companies do after they have your data. Our work provides a technical path for ‘data minimization’, a key principle in privacy laws like GDPR. By proving that we can get high-quality results without collecting sensitive data in the first place, we are providing a blueprint for how future AI regulations should be written,” he explained.

Vineet said the biggest challenge was that masking the face can make the final AI-edited photo look fake or degrade its quality.

“If you mask too much, the AI loses context and the photo looks weird. If you mask too little, you leak privacy. We developed a ‘smart blending’ technique that gives the AI just enough information to understand the lighting and shadows of the scene without seeing your actual biometric features. The result is a high-quality, professional-looking image where the ‘seams’ between your real face and the AI’s edits are completely invisible,” he said.
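The article does not describe how the team’s “smart blending” works, so the sketch below should not be read as their method. It shows ordinary feathered alpha blending, a common way to soften the seam when one image region is pasted into another. All names are hypothetical, the region is a rectangle rather than a segmented face, and the images are toy grayscale grids.

```python
from typing import List, Tuple

Image = List[List[int]]            # toy grayscale image, pixel values 0-255
Box = Tuple[int, int, int, int]    # (top, left, bottom, right), bottom/right exclusive


def feather_weight(r: int, c: int, box: Box, feather: int) -> float:
    """Weight of the original pixel: 0 outside the box, ramping up to 1 inside."""
    top, left, bottom, right = box
    if not (top <= r < bottom and left <= c < right):
        return 0.0
    # Depth in pixels from the nearest box edge; the edge pixel itself counts as 1.
    depth = min(r - top, bottom - 1 - r, c - left, right - 1 - c) + 1
    return min(1.0, depth / (feather + 1))


def feathered_blend(edited: Image, original: Image,
                    box: Box, feather: int) -> Image:
    """Blend `original` into `edited` inside `box`, fading over `feather` pixels."""
    top, left, bottom, right = box
    out = [row[:] for row in edited]
    for r in range(top, bottom):
        for c in range(left, right):
            w = feather_weight(r, c, box, feather)
            out[r][c] = round(w * original[r][c] + (1 - w) * edited[r][c])
    return out


if __name__ == "__main__":
    edited = [[100] * 7 for _ in range(7)]     # stand-in for the AI-edited photo
    original = [[200] * 7 for _ in range(7)]   # stand-in for the private original
    for row in feathered_blend(edited, original, (1, 1, 6, 6), feather=1):
        print(row)
```

With `feather=0` the function reduces to a hard paste; larger values trade a wider transition band for a less visible edge, which is a simple version of the too-much versus too-little trade-off Vineet describes.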

Meanwhile, Dipesh said that one thing people must remember when they use AI tools in the future is that “innovation does not have to come at the cost of your identity.”

“For a long time, we’ve been told that to get the best tech, we have to give up our data. Our research proves that isn’t true. You can have the world’s most powerful AI and your privacy, too. You should never have to choose between being creative and being safe,” he concluded, adding that the next big step is Verifiable Data Sovereignty.

“It’s not enough for a company to promise they won’t use your data; we need technical systems where a user can mathematically verify that their data was used only for the task they asked for and then immediately deleted. Combining this with on-device processing will be the key to an AI world where innovation and personal safety aren’t at odds,” said Dipesh.