The geometry of power: Algorithmic sovereignty in the 21st century

In October 2022, the United States (US) imposed sweeping export controls to restrict China’s access to advanced AI chips. Months later, China accelerated domestic semiconductor investment at a scale reminiscent of Cold War industrial policy and tightened controls over gallium and germanium – metals essential to chip production.

Meanwhile, generative AI systems began drafting legal briefs, diagnosing cancers, writing production-grade code, and shaping political narratives across democracies and autocracies alike.


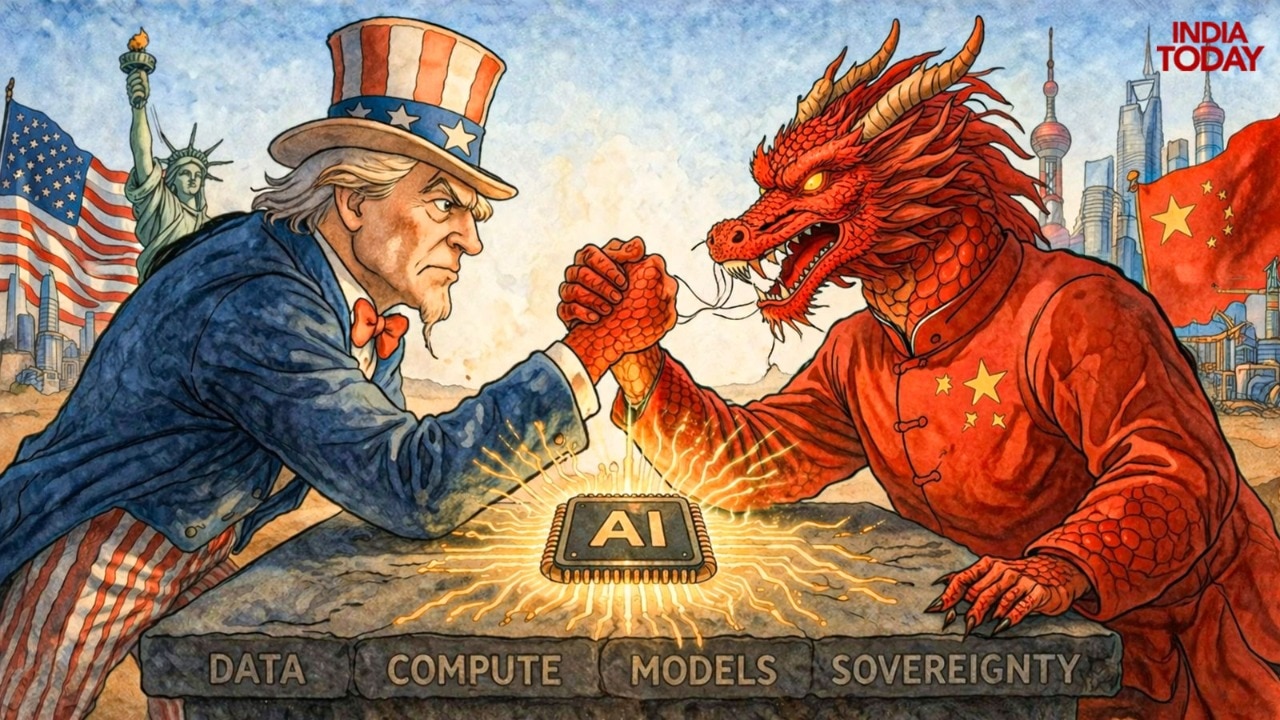

Every epoch in human history has had its equation of power. For the maritime empires of the 18th century, it was a simple function: hulls multiplied by cannons, projected across sea lanes. For the industrial states of the 19th, it became coal plus steel, raised to the power of mechanised labour. In the 20th century, the equation condensed into a stark symbol: E = mc². The variables of the 21st century are data (D), compute (C), and models (M). Increasingly, power resembles the function: P = f(D, C, M).

We are living through a civilisational transition towards algorithmic sovereignty. This does not imply digital autarky. Nor is it the withdrawal from global technological exchange. Rather, it denotes the capacity of a political community to train and deploy advanced AI systems. The algorithmic state will derive authority from its capacity to predict, model, and optimise traffic flows, credit markets, missile trajectories, and public sentiment. If oil was the combustible substrate of the past generation, data is the probabilistic substrate of the 21st.

But data alone is inert. Like crude oil beneath desert sands, it acquires value only when refined. The refinery of the AI age is made up of three essential layers. The first is semiconductor fabrication, particularly the ability to produce the advanced chips required for large-scale AI model training. The second is computing infrastructure, which includes high-performance data centres and cloud architectures capable of handling frontier workloads. The third is frontier model development itself: neural networks with billions of parameters, trained by gradient descent to minimise a loss function and so converge towards ever better prediction. Each forward pass is a multiplication of matrices; each backward pass, a correction of error. What appears to the user as effortless cognition is, in reality, a symphony of linear algebra executed at planetary scale. States that command these layers will shape strategic hierarchies.
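The forward and backward passes described above can be made concrete with a deliberately tiny sketch. It is an illustration under stated assumptions, not a picture of how any frontier model is built: the "network" is a single linear layer, the four data points are hypothetical, and the function names (`forward`, `backward`, `train`) are invented for this example.

```python
# Toy illustration only: one linear layer fitted to four hypothetical data points.

def forward(weights, inputs):
    """Forward pass: multiply the input matrix by the weight vector."""
    return [sum(w * x for w, x in zip(weights, row)) for row in inputs]


def mean_squared_error(predictions, targets):
    """Loss function: average squared gap between prediction and target."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def backward(inputs, predictions, targets):
    """Backward pass: gradient of the mean squared error for each weight."""
    n = len(inputs)
    errors = [p - t for p, t in zip(predictions, targets)]
    return [
        2.0 / n * sum(e * row[j] for e, row in zip(errors, inputs))
        for j in range(len(inputs[0]))
    ]


def train(inputs, targets, learning_rate=0.05, steps=2000):
    """Gradient descent: repeat forward pass, loss, backward pass, update."""
    weights = [0.0] * len(inputs[0])
    losses = []
    for _ in range(steps):
        predictions = forward(weights, inputs)
        losses.append(mean_squared_error(predictions, targets))
        gradient = backward(inputs, predictions, targets)
        # Step each weight a little way against its gradient.
        weights = [w - learning_rate * g for w, g in zip(weights, gradient)]
    return weights, losses


# Hypothetical data generated by y = 2x + 1; the column of 1.0s acts as a bias term.
INPUTS = [[0.0, 1.0], [1.0, 1.0], [2.0, 1.0], [3.0, 1.0]]
TARGETS = [1.0, 3.0, 5.0, 7.0]

if __name__ == "__main__":
    weights, losses = train(INPUTS, TARGETS)
    print("learned weights:", weights)
    print("first loss:", losses[0], "last loss:", losses[-1])
```

A frontier model runs the same loop with billions of weights instead of two and stacks of matrix multiplications instead of one, which is why chips and data centres, rather than the arithmetic itself, become the scarce resource.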

The emerging competition around AI resembles earlier technological rivalries, but with a critical difference. Nuclear weapons, for all their destructive capacity, were tightly controlled by states and confined to military doctrine. AI permeates everything – from financial systems and industrial production to intelligence gathering and battlefield decision-making. It is both infrastructure and weapon, both economic engine and instrument of statecraft. Whereas the nuclear age forced humanity to grapple with mutually assured destruction, the algorithmic age forces us to confront mutually assured acceleration.

Three distinct models of sovereign AI development have begun to crystallise.

The first is American: decentralised, venture-driven, and architected around private innovation, yet increasingly aligned with national strategy. Its strength lies in layered network effects and an alliance system that extends from Silicon Valley to Seoul. The CHIPS and Science Act channels tens of billions of dollars into domestic semiconductor fabrication. Defence agencies fund frontier AI research through DARPA, the Air Force Research Laboratory, and the Chief Digital and AI Office. Private firms such as OpenAI, Google DeepMind, and Anthropic drive model development at scale.

Hyperscale cloud providers like Amazon, Microsoft, and Google supply the computational backbone. Research universities such as Stanford and MIT sustain foundational talent pipelines. The state might not own the algorithm but it shapes incentives, restricts exports, funds foundational research, and mobilises private actors in moments of strategic urgency. Sovereignty here is diffused yet coordinated.

The second is Chinese: vertically integrated, state-directed, and fused with political authority. AI development is inseparable from industrial policy and civil-military fusion. The ‘New Generation AI Development Plan’ explicitly identifies AI as a core strategic industry. Provincial governments provide subsidies for chip fabrication plants. Firms such as Baidu, Alibaba, Tencent, and Huawei develop large language models integrated directly into state services. Surveillance systems and computer vision platforms operate within a political architecture that prioritises control and stability. Breakthroughs in reinforcement learning or autonomous systems move fluidly from civilian labs to military doctrine. This is sovereign AI as centralised command: infrastructural depth aligned tightly with state power.

The third, most clearly articulated in Europe, locates sovereignty in regulation rather than scale. Without commanding the deepest pools of venture capital or the most advanced semiconductor ecosystems, European states have sought influence through normative power – crafting comprehensive frameworks on data governance and AI safety that aim to shape global markets. The EU’s AI Act establishes risk-based classifications and compliance obligations. The General Data Protection Regulation (GDPR) reshaped data governance norms well beyond Europe’s borders. In this conception, control over standards becomes a strategic asset.

Between these poles lies a fourth possibility, still contested, where nations like India must navigate and define their own digital destinies.

India, with its digital public infrastructure, demonstrates an ability to build state-enabled platforms at continental scale without full state ownership of innovation. The question is whether this model can scale upward into frontier AI capabilities, or whether India will remain dependent on foundational models and semiconductor supply chains controlled elsewhere.

First, for India, the stakes are structural. With one of the world’s largest digital populations and a deep software talent base, the country possesses significant data capital. Yet, scale without compute is like potential without realisation. Advanced semiconductor fabrication remains conspicuous by its absence. High-performance computing clusters demand sustained capital investment. Research ecosystems require continuity measured in decades and insulation from electoral cycles.

Second, India’s challenge is linguistic. In a country with 22 constitutionally recognised languages and hundreds of dialects, frontier AI systems trained predominantly on English-language corpora risk encoding structural linguistic hierarchies. Algorithmic sovereignty for India may therefore require foundational models trained deeply in Indic languages, thus embedding pluralism into the architecture itself.

Third, the deeper question is whether a democracy of India’s scale can sustain long-horizon technological investment without succumbing to regulatory volatility or policy discontinuity. Algorithmic sovereignty in India may ultimately hinge less on engineering capacity than on institutional stability.

Fourth, AI also confronts us with the delegation of cognition itself. When autonomous systems make consequential decisions, accountability cannot be outsourced to code. For India, a civilisation marked by diversity, pluralism, and deep traditions of ethical reasoning, the governance of AI cannot rely entirely on imported normative templates. What values are embedded in the algorithms that shape public life? Here, India has the opportunity to articulate a distinctive framework for governing intelligent machines – one that lies at the heart of democratic legitimacy and morality.

Fifth, there is also a geopolitical opportunity for India. As supply chains fragment between American and Chinese blocs, India could position itself as a neutral compute and model-development hub.

Without these pillars, India risks becoming a mere consumer rather than a creator. The historical analogy may be instructive. In earlier centuries, states that failed to master the dominant technologies of the era often found themselves subordinated to those that did. Industrialisation divided the world between producers and dependents; the nuclear age separated deterrent powers from the strategically vulnerable. AI may produce a similar hierarchy.

A caveat is in order: absolute self-sufficiency is neither realistic nor desirable. Modern technological systems are inherently interdependent. The strategic objective is narrower but more demanding: control over critical layers that determine vulnerability. Autonomy at the margins is insufficient if dependence persists at the core. This is why debates about digital regulation, data governance, and technological partnerships are matters of grand strategy. The capacity to develop sovereign AI systems – trained on domestic data, operating on national infrastructure, and aligned with local ethical frameworks – will increasingly define political autonomy.

In this new geometry, the decisive advantage will belong to those who can align mathematics with strategy and infrastructure with imagination. And in that quiet convergence, the balance of power will be recalibrated.

(Pranav Jain is an IPS officer currently undergoing training as part of his probation. Pranav Sharma, currently on probation, is an IFS officer.)
